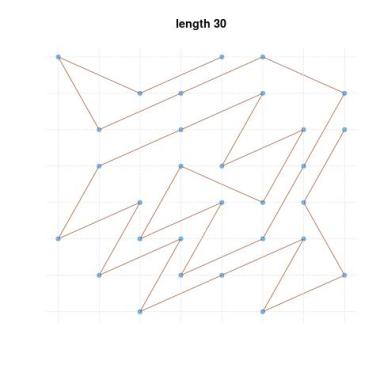

I came across this paper by Feras A. Saad and Wonyeol Lee, to appear in Proc. ACM Program. Lang. It calls for assessing random number generators under finite precision, rather than under the “fictitious infinite-precision (Real-RAM)” model, with the following illustration:

“Mironov (2012) demonstrates that floating-point effects in the Laplace random variate generator from existing software libraries can entirely destroy the real-world privacy guarantees of algorithms”
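As a crude illustration of how finite precision bites (my own toy example with the exponential distribution, not Mironov's Laplace attack), assume a uniform generator returning multiples of 2⁻⁵³ in [0,1), as CPython's `random.random()` does, and push it through the textbook inverse CDF −log(1−u). The naive sampler below is a hypothetical stand-in, not code from any library. Its largest output is 53 log 2 ≈ 36.74, and the two largest outputs are log 2 apart, so the far tail is represented by a very sparse set of values.

```python
import math

K = 53  # assumed number of random bits: uniforms are multiples of 2**-K in [0, 1)

def naive_exponential(u):
    """Inverse-CDF transform for Exp(1): F^{-1}(u) = -log(1 - u)."""
    return -math.log(1.0 - u)

# The two largest values on the 2**-K grid; both are exactly representable doubles.
u_top = 1.0 - 2.0**-K
u_next = 1.0 - 2.0**-(K - 1)

x_top = naive_exponential(u_top)
x_next = naive_exponential(u_next)

print(x_top)           # largest possible output, K*log(2), about 36.74
print(x_top - x_next)  # gap between the two largest outputs, log(2), about 0.693
```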

Their solution is to resort to a finite-precision computation of the CDF of the target distribution, and then to apply the inverse CDF transform to a stream of random bits. I did not go through any of the technical (gory) details of the implementation, presented as an optimised version of the original Knuth and Yao method, but the authors compute the CDFs of standard distributions from

“the GNU Scientific Library (GSL) by reusing high-quality CDF implementations. The built-in GSL Gaussian generators often have complex implementations spanning hundreds of lines of code, and each specify different output distributions which are all intractable to estimate. Indeed, any GSL random variate generator that makes just two (or more) calls to uniform is already intractable to analyze”

and claim faster execution times, larger output ranges, and minimal overhead for extended-accuracy generators. I wonder whether an MCMC study is in the works for handling intractable CDFs.
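For readers wondering what "applying the inverse CDF transform to a stream of random bits" amounts to, here is a minimal sketch under my own assumptions, not the authors' optimised Knuth and Yao construction. The target is a discrete distribution given by integer weights, so its CDF is exact in rational arithmetic. Each random bit halves a dyadic interval known to contain the (never fully materialised) uniform variate, and sampling stops as soon as that interval sits inside a single CDF bin. The function name `sample_inverse_cdf_bits` and its `bit_source` argument are hypothetical.

```python
import random
from fractions import Fraction
from itertools import accumulate

def sample_inverse_cdf_bits(weights, bit_source=None):
    """Exact inversion sampling from P(i) = weights[i] / sum(weights).

    weights: positive integers.
    bit_source: callable returning 0 or 1 (defaults to random.getrandbits(1)).
    Returns (sampled index, number of random bits consumed).
    """
    if bit_source is None:
        bit_source = lambda: random.getrandbits(1)
    total = sum(weights)
    # Exact CDF breakpoints 0 = cdf[0] < cdf[1] < ... < cdf[n] = 1.
    cdf = [Fraction(0)] + [Fraction(c, total) for c in accumulate(weights)]
    lo, width = Fraction(0), Fraction(1)  # dyadic interval [lo, lo + width) holding U
    bits = 0
    while True:
        # Bin containing the left endpoint: largest i with cdf[i] <= lo.
        i = max(j for j in range(len(weights)) if cdf[j] <= lo)
        if lo + width <= cdf[i + 1]:
            # The whole interval lies in bin i, so U does too: output F^{-1}(U).
            return i, bits
        # Otherwise refine with one more bit of U.
        width /= 2
        if bit_source():
            lo += width
        bits += 1
```

The exact `Fraction` arithmetic keeps the sketch obviously correct rather than fast, and it makes no claim to the entropy optimality of Knuth and Yao's tree-based generators.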