Archive for cosmology

Karim Benabed, astrophysician

Posted in Statistics, Travel, University life with tags ABC, adaptive importance sampling, ANR, astronomy, Bayesian inference, Bayesian model comparison, CMB, cosmology, cycling, dark energy, evidence, gravitational lensing, IAP, Institut d'Astrophysique de Paris, Nuit de l'Astronomie, Planck experiment, PMC, population Monte Carlo, traffic accident on December 5, 2025 by xi'an

The astronomer and cosmologist Karim Benabed was killed on Wednesday in Paris, run over by a truck while cycling (with no further details at the moment). He was a senior researcher at IAP (Institut d’Astrophysique de Paris) and we actively collaborated between 2005 and 2010 on an ANR project on efficient simulation methods for inferring cosmological parameters, based on PMC (population Monte Carlo). And on Bayesian model comparison. He was a very congenial person, very sharp in assimilating new methods and keen on exploring novel hypotheses. While we did not keep closely in touch, I would meet him now and then when visiting the IAP. Ironically, Darren Wraith, formerly a postdoc with him at IAP, was visiting me last week and we were reminiscing about that era as late as Saturday night over dinner… So sad (and also so absurd, a truck stopping the trajectory of someone managing to travel to the origins of the Universe). The above is a cartoon of him drawn during his cosmic microwave background presentation at the Nuit de l’Astronomie.

to the early Universe and back

Posted in Books, pictures, Statistics, Travel, University life with tags ABC, Bayesian inference, BOLFI, CMB, cosmology, cosmoPMC, cosmostats, dark matter, Guy Gavriel Kay, habilitation, IAP, Institut d'Astrophysique de Paris, J.R. Tolkien, likelihood-free, likelihood-free inference, model misspecification, Observatoire de Paris, Pierre Simon Laplace, SELFI, Simbelmynë on November 16, 2025 by xi'an

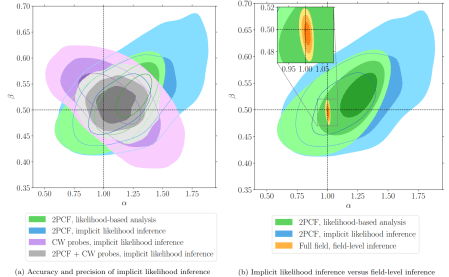

On 28 October, I spent the day at Institut d’Astrophysique de Paris (where I used to work on PMC for cosmology between 2005 and 2009), as a committee member for the habilitation defence of Florent Leclercq. Not only was it nice to be back in this unique institution (with vestiges from Laplace’s era), but this was a fantastic habilitation, with a superb thesis that beautifully gathered the different fields mastered by the candidate into a highly coherent discourse. One that could also serve as an introduction to cosmostatistics for many.

And provided the background to ten years (post-PhD) of research on forward modelling in cosmology and resulting Bayesian statistical analysis either by implicit likelihood (or likelihood-free) inference or by field-level inference. He describes the Simbelmynë software he developed to produce maps of the density field and analyse dark matter dynamics. Whose name is borrowed from Tolkien (along with a quote from Guy Gavriel Kay!):

“How fair are the bright eyes in the grass! Evermind they are called, simbelmynë in this land of Men, for they blossom in all the seasons of the year, and grow where dead men rest.” (J.R.R. Tolkien, The Lord of the Rings)

And the Bayesian computational and modelling tools he elaborated, like SELFI (Simulator expansion for likelihood-free inference, Leclercq et al., 2019), which relates to Michael Gutmann’s and Jukka Corander’s BOLFI. (Obviously, I did not get every aspect right from just reading the thesis and attending the lecture, in particular the remarks on using SELFI to assess model misspecification. But I remain impressed by the scope of the work and its likely impact on the field!)
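As far as one can tell from Leclercq et al. (2019), the core of SELFI is to linearise the black-box simulator around an expansion point θ₀ and to pair the resulting Gaussian likelihood with a Gaussian prior, so that the posterior comes in closed form. The following is a minimal NumPy sketch of that linear-Gaussian update under exactly those assumptions; the function name and arguments are hypothetical, and in practice the Jacobian `grad_f` and covariance `C0` would themselves be estimated from simulations.

```python
import numpy as np


def linear_gaussian_posterior(phi_obs, f0, grad_f, C0, theta0, S):
    """Closed-form Gaussian posterior for theta under a linearised simulator.

    Assumed model (illustrative, not the SELFI code itself):
        phi_obs ~ N(f0 + grad_f @ (theta - theta0), C0)   # linearised likelihood
        theta   ~ N(theta0, S)                            # Gaussian prior
    """
    C0_inv = np.linalg.inv(C0)
    # Posterior covariance: inverse of (data information through the Jacobian + prior precision).
    Gamma = np.linalg.inv(grad_f.T @ C0_inv @ grad_f + np.linalg.inv(S))
    # Posterior mean: expansion point shifted by the weighted residual at theta0.
    gamma = theta0 + Gamma @ grad_f.T @ C0_inv @ (phi_obs - f0)
    return gamma, Gamma


# Toy usage: two parameters, two summaries, identity Jacobian and covariances.
mean, cov = linear_gaussian_posterior(
    phi_obs=np.array([2.0, 4.0]),
    f0=np.zeros(2),
    grad_f=np.eye(2),
    C0=np.eye(2),
    theta0=np.zeros(2),
    S=np.eye(2),
)
print(mean)  # [1. 2.]
```

With equal prior and likelihood precisions, the posterior mean lands halfway between the prior mean and the observed summaries, which is the expected conjugate behaviour.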

a bad graph about Hubble discrepancies

Posted in Books, pictures, Statistics, Travel, University life with tags bad graph, CMB, cosmology, Hubble constant, Nature Reviews Physics on September 23, 2020 by xi'an

Here is a picture seen in a Nature Reviews Physics paper I came across, on the Hubble constant being consistently estimated as larger now than previously. I have no informed comment to make on the paper, which argues that these discrepancies support altering the composition of the Universe shortly before the emergence of the cosmic microwave background (CMB), but the way it presents the confidence assessments of the same constant H₀ based on 13 different experiments is rather ghastly, from using inclined confidence intervals, to adding a USA Today touch to the graph via a broken bridge and a river below, to resorting to different scales for both parts of the bridge…

simulated summary statistics [in the sky]

Posted in Statistics with tags ABC, approximate likelihood, Bayes factor, computer-simulated model, cosmology, cosmostats, de-biasing, urbi et orbi on October 10, 2018 by xi'an

Thinking it was related to ABC (although in the end it is not!), I recently read a baffling cosmology paper by Jeffrey and Abdalla. The data d there means an observed (summary) statistic, while the summary statistic is a transform of the parameter, μ(θ), which calibrates the distribution of the data. With nuisance parameters. More intriguing to me is the statement that the correct likelihood of d is indexed by a simulated version of μ(θ), μ'(θ), rather than by μ(θ). Which seems to assume that the pseudo- or simulated data can be produced for the same value of the parameter as the observed data. The rest of the paper remains incomprehensible to me, as I do not understand how the simulated versions are simulated.

“…the corrected likelihood is more than a factor of exp(30) more probable than the uncorrected. This is further validation of the corrected likelihood; the model (i.e. the corrected likelihood) shows a better goodness-of-fit.”

The authors further resort to Bayes factors to compare corrected and uncorrected versions of the likelihoods, which leads (see quote) to picking the corrected version. But are they comparable as such, given that the corrected version involves simulations that are treated as supplementary data? As noted by the authors, the Bayes factor unsurprisingly goes to one as the number M of simulations grows to infinity, as supported by the graph below.
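Why the Bayes factor should tend to one can be seen in a scalar Gaussian toy setting, which is an assumption made here for illustration and not necessarily the construction used by Jeffrey and Abdalla. If d ~ N(μ(θ), σ²) and μ'(θ) is the average of M independent simulations from the same distribution, then d − μ'(θ) has variance σ²(1 + 1/M). A "corrected" likelihood using that inflated variance and an "uncorrected" one plugging μ'(θ) in as the exact mean then differ by a log-ratio that vanishes as M grows, at every θ, hence a ratio of evidences tending to one. The function names below are hypothetical.

```python
import math


def gaussian_logpdf(x, mean, var):
    """Log-density of N(mean, var) at x."""
    return -0.5 * (math.log(2.0 * math.pi * var) + (x - mean) ** 2 / var)


def log_likelihood_ratio(d, mu_sim, sigma2, M):
    """log(corrected / uncorrected likelihood) for one observed statistic d.

    Toy assumptions: d ~ N(mu, sigma2) and mu_sim averages M independent
    simulations, each N(mu, sigma2), so d - mu_sim ~ N(0, sigma2 * (1 + 1/M)).
    The uncorrected likelihood treats mu_sim as if it were the exact mean.
    """
    corrected = gaussian_logpdf(d, mu_sim, sigma2 * (1.0 + 1.0 / M))
    uncorrected = gaussian_logpdf(d, mu_sim, sigma2)
    return corrected - uncorrected


for M in (1, 10, 100, 10_000):
    print(M, round(log_likelihood_ratio(3.0, 0.0, 1.0, M), 4))
```

With a residual of three standard deviations, the corrected likelihood wins for every M, since its wider variance absorbs the simulation noise, but the log-ratio shrinks towards zero as M increases. Summed over many observations, such per-point gains could add up to large factors, while still vanishing in the limit.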

ABC²DE

Posted in Books, Statistics with tags ABC, ABC algorithm, Carnegie Mellon University, CMU, conditional density, cosmology, Edinburgh, FlexCode, IAP, local regression, local scaling, Monte Carlo error, non-parametric kernel estimation, reference table on June 25, 2018 by xi'an

A recent arXival on a new version of ABC based on kernel estimators (but one could argue that all ABC versions are based on kernel estimators, one way or another). In this ABC-CDE version, Izbicki, Lee and Pospisil [from CMU, hence the picture!] argue that past attempts failed to exploit the full advantages of kernel methods, including the 2016 ABCDE method (from Edinburgh) briefly covered on this blog. (As an aside, CDE stands for conditional density estimation.) They also criticise these attempts for selecting summary statistics and hence failing in sufficiency, which seems a non-issue to me, as already discussed numerous times on the ‘Og. One point of particular interest in the long list of drawbacks found in the paper is the inability to compare several estimates of the posterior density, since this is not directly ingrained in the Bayesian construct. Unless one moves to higher ground by calling for Bayesian non-parametrics within the ABC algorithm, a perspective which I am not aware has been pursued so far…

Among the selling points of ABC-CDE is that the true focus is on estimating a conditional density at the observable x⁰ rather than everywhere. Hence, simulations from the reference table are rejected if the pseudo-observations are too far from x⁰ (which implies using a relevant distance and/or choosing adequate summary statistics). A conditional density estimator is then built from this subsample (which makes me wonder about a double use of the data).
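The reject-then-estimate step can be sketched in plain Python on a hypothetical toy model (uniform prior, Gaussian data, sample mean as summary statistic): simulate a reference table from the prior predictive, then keep the fraction of parameter draws whose summaries lie closest to the observed one. Keeping a fixed quantile rather than a fixed tolerance ε is an assumption made here; the retained draws form the subsample on which a conditional density estimator would then be fitted.

```python
import random


def build_reference_table(n_sims, n_obs, rng):
    """Simulate (theta, summary) pairs from the prior predictive.

    Hypothetical toy model: theta ~ Uniform(-5, 5), x_1..x_n ~ N(theta, 1),
    summary statistic = sample mean of the x's.
    """
    table = []
    for _ in range(n_sims):
        theta = rng.uniform(-5.0, 5.0)
        xs = [rng.gauss(theta, 1.0) for _ in range(n_obs)]
        table.append((theta, sum(xs) / n_obs))
    return table


def abc_reject(table, s_obs, keep_fraction):
    """Rejection ABC: keep the parameters whose summaries are closest to s_obs."""
    ranked = sorted(table, key=lambda row: abs(row[1] - s_obs))
    n_keep = max(1, round(keep_fraction * len(ranked)))
    return [theta for theta, _ in ranked[:n_keep]]


rng = random.Random(2018)
table = build_reference_table(20_000, 10, rng)
accepted = abc_reject(table, s_obs=1.3, keep_fraction=0.01)
print(len(accepted), sum(accepted) / len(accepted))
```

Since the same observed summary both selects the subsample and conditions the subsequent density estimate, this sketch also makes the possible double use of the data visible.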

The specific density estimation approach adopted for this is called FlexCode and relates to an earlier if recent paper by Izbicki and Lee that I did not read. As in many other density estimation approaches, they use an orthonormal basis (including wavelets) in low dimension to estimate the marginal of the posterior for one or a few components of the parameter θ, noticing that the posterior marginal is a weighted sum of the terms in the basis, where the weights are the posterior expectations of the basis functions themselves. All fine! The next step is to compare [posterior] estimators through an integrated squared error loss that is not weighted by the prior or the posterior, and which in my opinion does not tell much about the quality of the approximation for Bayesian inference. It is furthermore approximated by a doubly integrated [over parameter and pseudo-observation] squared error loss, using the ABC(ε) sample from the prior predictive. And the approximation error only depends on the regularity of the error, that is, of the difference between the posterior and the approximated posterior. Which strikes me as odd, since the Monte Carlo error should take over but does not appear at all. I am thus unsure whether the convergence results are that relevant. (A difficulty with this paper is its strong dependence on the earlier one, as it keeps referencing one version or another of FlexCode. Without having read the original one, I spotted a mention of the use of random forests for selecting summary statistics of interest, with no detail on the difference with our own ABC random forest papers (for both model selection and estimation). For instance, the remark that “nuisance statistics do not affect the performance of FlexCode-RF much” reproduces what we observed with ABC-RF.)
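The orthogonal-series idea can be illustrated in one dimension: with an orthonormal basis φ_j on [0, 1], a density expands as Σ_j β_j φ_j(θ) with β_j = E[φ_j(θ)], so each coefficient can be estimated by averaging φ_j over draws, for instance ABC-accepted parameters rescaled to [0, 1]. This sketch assumes a cosine basis and plain sample averages; as far as one can tell, FlexCode instead estimates each coefficient as a function of x by regressing φ_j(θ) on x, so this is a simplification and not FlexCode itself. Function names are hypothetical.

```python
import math


def cosine_basis(j, u):
    """Orthonormal cosine basis on [0, 1]: phi_0 = 1, phi_j = sqrt(2) cos(j pi u)."""
    return 1.0 if j == 0 else math.sqrt(2.0) * math.cos(j * math.pi * u)


def series_density(sample, n_terms):
    """Truncated orthogonal-series density estimate on [0, 1].

    Each coefficient beta_j = E[phi_j(theta)] is estimated by the sample
    average of phi_j over the draws; truncation at n_terms acts as smoothing.
    """
    betas = [sum(cosine_basis(j, u) for u in sample) / len(sample) for j in range(n_terms)]

    def density(u):
        # Weighted sum of basis functions; may dip below zero in the tails.
        return sum(b * cosine_basis(j, u) for j, b in enumerate(betas))

    return density


# Midpoint grid on [0, 1]: every coefficient except beta_0 vanishes, so the
# estimate is flat and equal to one.
grid = [(i + 0.5) / 1000 for i in range(1000)]
flat = series_density(grid, n_terms=5)
print(round(flat(0.3), 6))  # 1.0
```

Note that nothing in this construction carries a Monte Carlo error term by itself: the averages defining β_j are noisy, which is precisely the source of error the convergence results above seem to leave out.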

The long experiment section always relies on the most standard rejection ABC algorithm, without accounting for the many alternatives produced in the literature (like Li and Fearnhead, 2018, who use Beaumont et al.’s 2002 scheme along with importance sampling improvements, or ours). In the case of real cosmological data, used twice, I am uncertain about the comparison as I presume the truth is unknown. Furthermore, from having worked on similar data a dozen years ago, I find it unclear why ABC is necessary in such a context (although I remember us once running a test of ABC at the Paris astrophysics institute).
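For contrast with plain rejection, the local-linear regression adjustment of Beaumont et al. (2002) can be sketched for a scalar summary: weight the retained draws with an Epanechnikov kernel of bandwidth δ, fit a weighted linear regression of θ on s − s_obs, and shift each draw by the fitted slope times its summary discrepancy. This is a minimal illustration under those assumptions (scalar θ and summary, hypothetical function name), not the code used in any of the papers mentioned.

```python
def regression_adjust(thetas, summaries, s_obs, delta):
    """Local-linear regression adjustment of ABC draws (scalar summary).

    Draws with |s - s_obs| >= delta get zero Epanechnikov weight and are dropped;
    the others are shifted by the weighted least-squares slope of theta on s - s_obs.
    Returns the adjusted draws and their kernel weights.
    """
    kept = []
    for theta, s in zip(thetas, summaries):
        t = abs(s - s_obs) / delta
        if t < 1.0:
            kept.append((theta, s - s_obs, 1.0 - t * t))  # unnormalised Epanechnikov weight
    if len(kept) < 2:
        raise ValueError("need at least two draws inside the bandwidth")
    w_sum = sum(w for _, _, w in kept)
    mean_d = sum(w * d for _, d, w in kept) / w_sum
    mean_t = sum(w * th for th, _, w in kept) / w_sum
    cov = sum(w * (d - mean_d) * (th - mean_t) for th, d, w in kept)
    var = sum(w * (d - mean_d) ** 2 for _, d, w in kept)
    beta = cov / var  # weighted regression slope (assumes the summaries are not all equal)
    adjusted = [th - beta * d for th, d, _ in kept]
    weights = [w for _, _, w in kept]
    return adjusted, weights


# Exactly linear toy relation theta = 2 s + 1: after adjustment every draw sits at 1.
adj, w = regression_adjust(
    thetas=[0.0, 0.6, 1.2, 1.8],
    summaries=[-0.5, -0.2, 0.1, 0.4],
    s_obs=0.0,
    delta=1.0,
)
print([round(a, 6) for a in adj])  # [1.0, 1.0, 1.0, 1.0]
```

The adjusted draws, together with their kernel weights, approximate the posterior at s_obs, which lets a wider tolerance be used than in plain rejection for the same number of simulations.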